Data Is the Moat

The models are commoditizing. The harnesses are commoditizing. The thing that isn't is the domain knowledge, and the engineers who carry it.

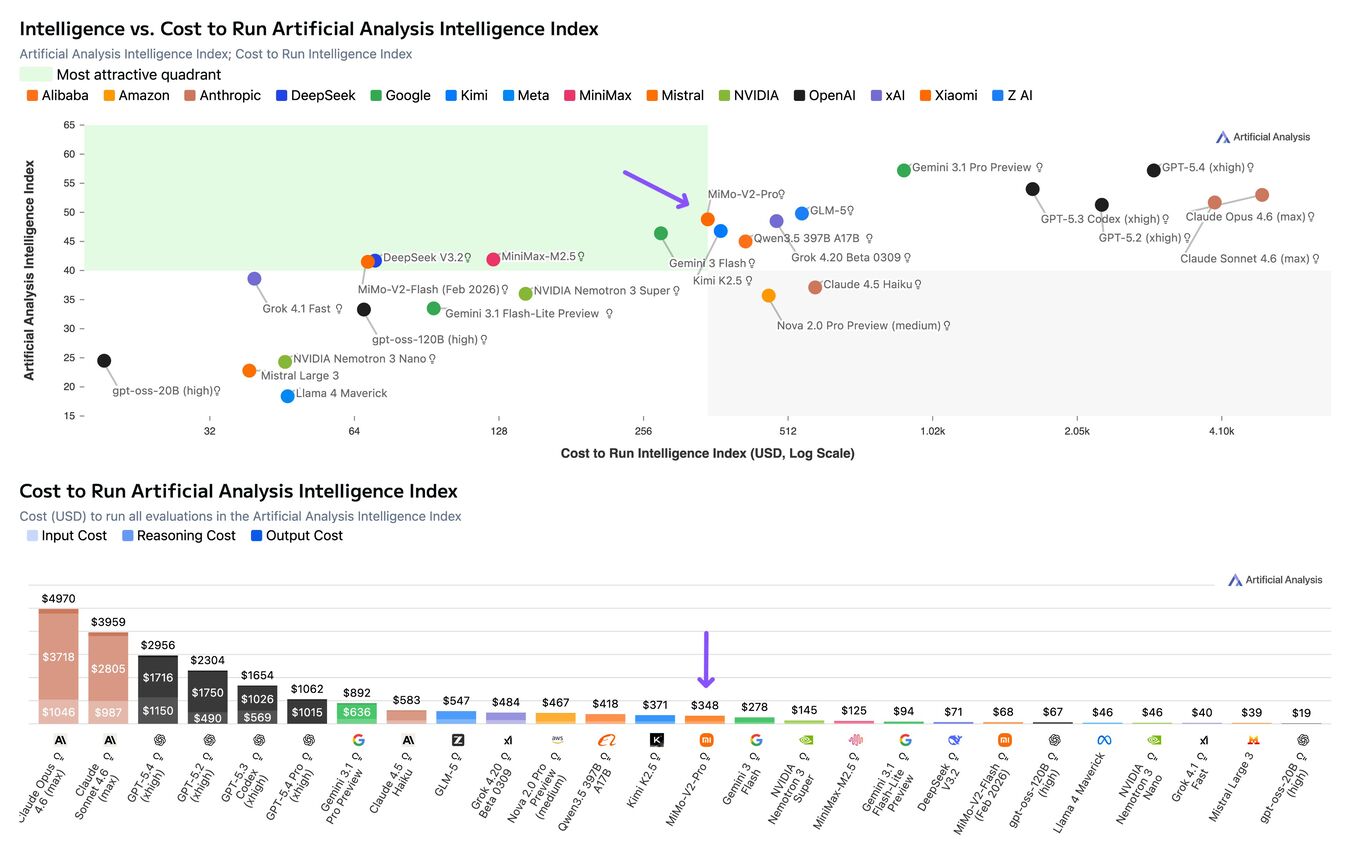

The AI discourse right now is fixated on two things: model improvements and agent frameworks. Which model is best? Which orchestration layer is cleanest? How many tools can you wire into a loop? How many agents can you run in parallel? Every week my X feed fills up with a new harness, a new benchmark, a new claim about reasoning.

None of it matters as much as the data.

The models are converging. A leaked Google memo called it in 2023: “We Have No Moat, And Neither Does OpenAI.” Since then, Qwen, DeepSeek, and Llama have all closed much of the gap with the proprietary labs. James Betker at OpenAI said it best:

“Trained on the same dataset for long enough, pretty much every model with enough weights and training time converges to the same point. Model behavior is not determined by architecture, hyperparameters, or optimizer choices. It’s determined by your dataset, nothing else.”

If the models are converging, the harnesses are right behind them. LangChain, CrewAI, AutoGen. The third-party frameworks barely had time to establish themselves before the model providers started absorbing the pattern. OpenAI shipped a built-in Agents SDK with tool use and guardrails baked into their API. Anthropic built agentic tooling directly into Claude. The orchestration layer that was a startup category 18 months ago became a feature checkbox for the big labs before the rest of us could catch up.

Then the open-source community compressed the whole cycle into a month. OpenClaw brought agentic computer use to the desktop, and half of X went out and bought $800 Mac Minis to run a CPU load a Raspberry Pi 4 could handle, while still paying for cloud inference. Hermes Agent from Nous Research dropped days later with better memory and a cleaner architecture. For about a week the discourse was about which open-source harness was better. Then Claude shipped the same functionality straight through the GUI, and the announcement pulled 16 million views in 4 hours. The entire agent harness category dissolved into another product update. If your competitive advantage is your model or your harness, you’re standing on ground that erodes weekly.

None of that matters if you don’t have anything worth pointing it at.

The datasets that actually move the needle in cancer research weren’t built by AI companies. TCGA took thousands of researchers and over a decade of coordinated sequencing to characterize 33 cancer types. GTEx cataloged gene expression across 54 human tissues from nearly 1,000 donors. CCLE profiled over 1,000 cancer cell lines to connect genomic features to drug response. The Human Genome Project took 13 years and $2.7 billion. The bottleneck was never compute. It was cells to look at, machines to sequence them, and people who knew how to turn raw sequencer output into clean FASTQ files and put them somewhere useful.

An agent can query all of this. It can’t produce any of it. New data, and knowing where and how to collect it, is the moat in biology. The same is true everywhere else. The operations team with 5 years of structured incident data didn’t download that from Hugging Face. The law firm with 20 years of case outcomes mapped to strategy didn’t fine-tune their way to it. That data was built by people with domain knowledge, over time, and no model update makes it obsolete.

For engineers, the data is what you know.

If you’re an infrastructure engineer, your moat isn’t the datasets you work with. It’s the accumulated knowledge of how production systems actually behave. Knowing that the agent crashed because it hit a connection pool limit, not because the prompt was wrong. Knowing the database dropped its user permissions in the last automated update and that’s why the agent can’t connect to it. Knowing the bottleneck is data ingress latency, not inference speed. Knowing that the deployment pipeline silently dropped a migration and that’s why embeddings are stale.

None of this lives in a model. It lives in the engineer who has been debugging data pipelines long enough to have intuition about where they break. That intuition is the thing that turns a demo into a production system.

I’ve watched the same pattern on every project I’ve touched. The agentic layer gets all the attention. The data infrastructure underneath it gets none. And when the agent fails in production, it’s almost never a model problem. It’s a data problem: missing fields, stale embeddings, broken joins, schema drift, permissions that worked in dev but not in prod. The engineer who can diagnose that in 20 minutes instead of 2 days is the actual moat.
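To make “it’s a data problem” concrete, here is a minimal sketch of a pre-flight audit that catches a few of those failures before an agent ever sees the data. It assumes records arrive as Python dicts and that each carries `updated_at` and `embedded_at` timestamps; the schema, the field names, and the `audit_records` function are all hypothetical, and it only covers missing fields, schema drift, type mismatches, and stale embeddings, not broken joins or permissions.

```python
from datetime import datetime, timezone

# Hypothetical schema for records feeding an agent's retrieval index.
# Field names are illustrative assumptions, not from any real system.
EXPECTED_SCHEMA = {
    "id": str,
    "text": str,
    "updated_at": datetime,   # when the source text last changed
    "embedded_at": datetime,  # when its embedding was last computed
}


def audit_records(records, schema=EXPECTED_SCHEMA):
    """Return human-readable data problems found in a list of dict records."""
    problems = []
    for i, rec in enumerate(records):
        # Fields the schema expects but the record lacks.
        for field in sorted(schema.keys() - rec.keys()):
            problems.append(f"record {i}: missing field '{field}'")
        # Fields the record has but the schema doesn't know about.
        for field in sorted(rec.keys() - schema.keys()):
            problems.append(f"record {i}: unexpected field '{field}' (possible schema drift)")
        # Fields present but holding the wrong type.
        for field, expected in schema.items():
            if field in rec and not isinstance(rec[field], expected):
                problems.append(
                    f"record {i}: field '{field}' is {type(rec[field]).__name__}, "
                    f"expected {expected.__name__}"
                )
        # Stale embedding: the text changed after it was last embedded.
        updated, embedded = rec.get("updated_at"), rec.get("embedded_at")
        if isinstance(updated, datetime) and isinstance(embedded, datetime) and embedded < updated:
            problems.append(f"record {i}: stale embedding (embedded before last update)")
    return problems


# Usage with made-up records.
jan = datetime(2024, 1, 1, tzinfo=timezone.utc)
feb = datetime(2024, 2, 1, tzinfo=timezone.utc)
records = [
    {"id": "a", "text": "fine", "updated_at": jan, "embedded_at": feb},
    {"id": "b", "text": "edited", "updated_at": feb, "embedded_at": jan},
    {"id": 3, "body": "renamed column", "updated_at": jan, "embedded_at": feb},
]
for problem in audit_records(records):
    print(problem)
```

On these sample records it flags the second record’s stale embedding and the third record’s missing `text`, unexpected `body` column, and integer `id`. The code itself is trivial; knowing which fields and timestamps are worth checking in a given pipeline is the part that takes domain experience.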

A cancer biologist who can write SQL is worth more to an oncology AI platform than a prompt engineer who can’t interpret a gene expression matrix. A DevOps engineer who understands why the production deployment is failing at 3am is worth more than a new agent harness. The people who understand the domain, who can read the data, who know what questions to ask and what the answers actually mean… they’re the ones generating value while the market invests in its own hype.

Most AI startups right now are thin wrappers around an API call. I’ve been there and I’ve tried that. The business model doesn’t work: anything that thin, the model provider can ship as a feature.

The models will keep getting smarter. The harnesses will keep getting absorbed. The agents will keep getting more capable. All of that is converging toward commodity. The thing that doesn’t converge is the understanding of what to point them at, what the data means, why the system broke, and what to build next. That knowledge comes from years of working inside a domain, not from a model trained on the internet’s average understanding of it.

Data is the moat. Everything else is a feature.

Reach me on X if you have questions or want to compare notes.